At the 2010 Electronic Entertainment Expo in Los Angeles, Calif., Nintendo revealed a long-rumored product that created massive traffic jams and lines on the expo floor. It was the Nintendo 3DS, a handheld device with a form factor similar to the DS and DS Lite models that came before it. But this new handheld gaming gadget boasted a feature that left its predecessors behind: 3-D.

But that wasn't all. Nintendo also revealed that the technology the company used in the 3DS meant that players wouldn't have to wear glasses to see the 3-D effect. On top of that, the company showed off a feature that allowed the user to adjust the depth of the 3-D effect on the device.

The announcement made quite a stir at the Nintendo booth in the exhibition hall. People lined up for hours just to get a few minutes with the device. Nintendo tethered each 3DS to its booth, and each system had its own dedicated spokesperson, dressed all in white, ready to answer questions.

One question that was asked but not answered in detail was, "When is it coming out?" The wait turned out to be a little under a year. The 3DS went on sale in Japan in February 2011, and in March 2011 gamers in North America and Europe got their first chance to purchase a 3DS system of their very own.

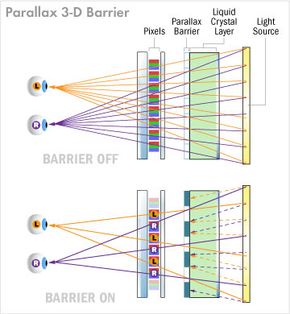

So how does this new device work? What makes it different from the DS units that preceded it? And just how can you see 3-D images on a screen without wearing glasses? We're going to answer all those questions and show you exactly what's running the show inside the Nintendo 3DS.
