You're wandering the streets of London for the first time, soaking up the sights and sounds all around you, when you and a friend stumble upon a gigantic tower with a bell near the top. You both know the name of this famous bell, but neither of you can remember what it is.

To solve this mystery, you whip out your smartphone and begin guessing at Internet search keyword combinations that may (or may not) help you deduce the bell's name. Meanwhile, your friend simply points her phone's camera at the clock tower and snaps a picture. Moments later, she has the answer: The bell's name is Big Ben.

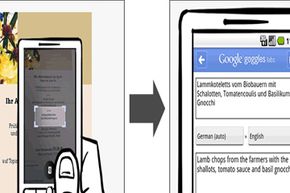

To find the answer, she simply loaded a smartphone app called Google Goggles. Goggles is an Internet search feature that uses camera snapshots in place of keywords. In short, it's a type of visual search. Snap a picture and then let Google's algorithms do the brainstorming to figure out what you're looking at.

Google eventually wants the app to be a universal visual search tool. But Goggles is still in development (a fact that Google stresses), and identifying all sorts of stuff by snapshot alone is a major challenge.

For now, Google emphasizes that Goggles works its magic best on iconic sights, such as landmarks, book covers, bar codes, wine bottle labels, corporate logos and artwork. But the company is working on increasing the accuracy of Goggles. Keep reading to learn more about how Goggles might help you see -- and search -- your world with a fresh set of eyes.
